EU AI Act Compliance for Agentic Systems

Meeting High-Risk AI Requirements

Heath Emerson, MBA — Founder & CEO

February 2026 | apotheon.ai


Executive Summary

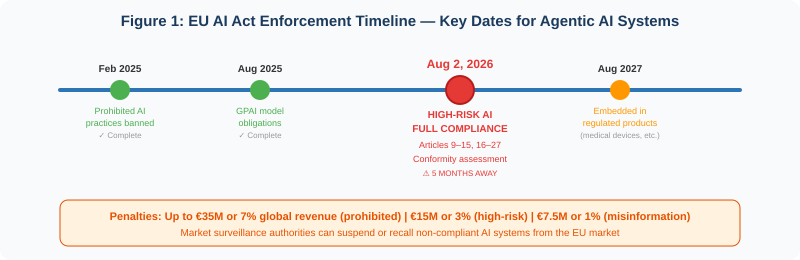

The EU AI Act (Regulation (EU) 2024/1689) enters full enforcement for high-risk AI systems on August 2, 2026. This is the first comprehensive AI regulation in the world, and its extraterritorial reach—applying to any organization whose AI systems are used within the EU or produce outputs affecting EU residents—means that compliance is a global requirement, not a regional one. Penalties for non-compliance reach €35 million or 7% of global annual turnover for prohibited practices, and €15 million or 3% for high-risk system violations.

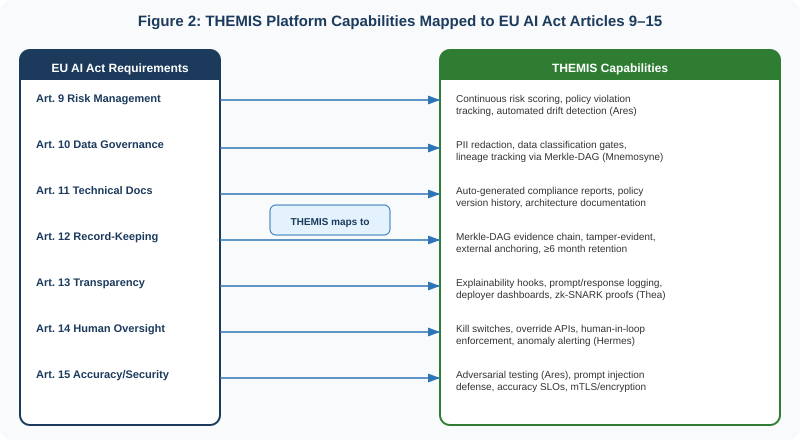

Agentic AI systems (autonomous agents that reason, plan, and execute multi-step workflows) present unique compliance challenges that the Act’s framers did not fully anticipate. Agents spawn sub-agents, invoke external tools, make real-time decisions, and operate with degrees of autonomy that strain conventional governance models. Yet the Act’s requirements apply in full: risk management (Article 9), data governance (Article 10), technical documentation (Article 11), record-keeping (Article 12), transparency (Article 13), human oversight (Article 14), and accuracy, robustness, and cybersecurity (Article 15).

This paper maps each of these requirements to the specific capabilities of Apotheon.ai’s THEMIS governance runtime and the broader AIOS platform, demonstrating how organizations deploying agentic AI systems can achieve compliance without building governance infrastructure from scratch. A downloadable compliance checklist accompanies this paper, providing a practical tool for compliance teams to track their readiness against each Article requirement.

Why Agentic AI Systems Require Special Compliance Attention

The EU AI Act was drafted primarily with conventional AI systems in mind—models that receive inputs and produce outputs within a bounded scope. Agentic AI systems challenge this model in several fundamental ways that compliance teams must address explicitly.

Autonomy and cascading actions. An agentic system does not simply answer a question. It formulates plans, executes multi-step workflows, invokes tools, and adapts based on intermediate results. A single user request can trigger dozens of agent actions, each of which may process personal data, make decisions affecting individuals, or modify external systems. Each action is a compliance event under the Act.

Sub-agent spawning. Many agentic frameworks allow agents to delegate tasks to sub-agents. The parent agent’s intended purpose may differ from the sub-agent’s actual behavior. Risk management (Article 9) must account for emergent behavior across the agent hierarchy, not just the top-level agent.

Tool integration uncertainty. Agents that invoke external tools—APIs, databases, search engines, code execution environments—introduce risk vectors that extend beyond the AI model itself. Data governance (Article 10) must cover data accessed through tools, not just training data.

Temporal complexity. Conventional AI systems produce discrete outputs. Agentic systems operate over time—maintaining state, remembering context, accumulating knowledge. Record-keeping (Article 12) must capture not just individual decisions but the decision chain across an entire session or workflow.

Non-determinism. Language model outputs are stochastic. The same agent with the same input may produce different action sequences on different runs. Accuracy and robustness (Article 15) must be defined probabilistically, not deterministically.
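One way to make "defined probabilistically" concrete is to run the same task many times and report a confidence interval on the success rate rather than a single pass/fail result. The sketch below uses the standard Wilson score interval; the function names and the 95% confidence level are illustrative assumptions, not something the Act or THEMIS prescribes.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial success rate (z = 1.96 is roughly 95%)."""
    if trials <= 0 or not 0 <= successes <= trials:
        raise ValueError("need trials > 0 and 0 <= successes <= trials")
    p = successes / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return center - half, center + half


def meets_target(successes: int, trials: int, target: float) -> bool:
    """True only if even the lower confidence bound clears the target rate."""
    lower, _ = wilson_interval(successes, trials)
    return lower >= target
```

With 95 correct runs out of 100, the lower bound is roughly 0.89, so a 90% accuracy target would not yet be demonstrated even though the observed rate is 95%. More runs narrow the interval.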

The EU AI Act does not exempt agentic systems from high-risk requirements. It applies to any AI system whose intended purpose falls under Annex III—regardless of the system’s architecture. The burden of proving compliance falls on the provider.

Article-by-Article Compliance Mapping

The following section maps each high-risk requirement (Articles 9–15) to the specific THEMIS and AIOS platform capabilities that address it. For each article, we describe the regulatory requirement, the agentic-specific challenge, and the THEMIS implementation.

Article 9: Risk Management System

Requirement: Providers must establish, implement, document, and maintain a risk management system throughout the AI system’s lifecycle. This includes identifying and analyzing known and reasonably foreseeable risks, estimating and evaluating risks that may emerge when the system is used in accordance with its intended purpose, and adopting appropriate risk mitigation measures. Testing must verify that the system performs consistently and complies with requirements.

Agentic challenge: Agents create emergent risk through multi-step reasoning, tool chaining, and sub-agent delegation. Risk is not static—it evolves dynamically as the agent encounters novel situations. A risk assessment performed before deployment may not capture risks that emerge during autonomous operation.

THEMIS implementation: THEMIS provides continuous risk scoring through real-time policy evaluation. Every agent action is assessed against the risk management framework at the moment of execution—not periodically. Policy violation rates, anomaly detection alerts, and drift indicators are tracked via Prometheus/Grafana dashboards, providing the ongoing risk identification that Article 9(2)(b) requires. Ares (the AIOS security testing module) performs automated adversarial testing against agent workflows, identifying vulnerabilities through red-team simulation. Risk management records are stored in the Merkle-DAG evidence chain, creating a tamper-evident history of risk assessments and mitigation decisions that satisfies Article 9’s documentation requirements.
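As a rough illustration of scoring risk at the moment of execution, the sketch below assigns points to each proposed action and maps the total to a decision. The `AgentAction` fields, the point weights, and the thresholds are all hypothetical; they are not THEMIS's actual policy model, which the paper does not specify.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentAction:
    agent_id: str
    tool: str
    touches_personal_data: bool
    modifies_external_system: bool
    delegation_depth: int  # 0 = top-level agent, 1 = sub-agent, and so on


# Illustrative weights on a 0-100 scale (assumed, not taken from any standard).
POINTS_PERSONAL_DATA = 40
POINTS_EXTERNAL_WRITE = 40
POINTS_PER_DELEGATION_LEVEL = 10


def risk_score(action: AgentAction) -> int:
    """Sum the risk points for one action, capped at 100."""
    score = 0
    if action.touches_personal_data:
        score += POINTS_PERSONAL_DATA
    if action.modifies_external_system:
        score += POINTS_EXTERNAL_WRITE
    score += POINTS_PER_DELEGATION_LEVEL * action.delegation_depth
    return min(score, 100)


def evaluate(action: AgentAction, review_at: int = 50, block_at: int = 80) -> str:
    """Return 'allow', 'review', or 'block' for a single proposed action."""
    score = risk_score(action)
    if score >= block_at:
        return "block"
    if score >= review_at:
        return "review"
    return "allow"
```

Because the delegation depth feeds into the score, the same tool call is treated more cautiously when a sub-agent issues it, which is one simple way to reflect risk across the agent hierarchy.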

Article 10: Data and Data Governance

Requirement: Training, validation, and testing data sets must be subject to appropriate data governance practices. This includes ensuring data is relevant, sufficiently representative, and—to the best extent possible—free of errors and complete. Organizations must document data provenance, preprocessing methodology, and potential biases.

Agentic challenge: Agents access data dynamically through tools and APIs—not just through pre-curated training sets. A RAG-enabled agent retrieves documents at runtime; a tool-using agent queries databases, web services, and file systems. Data governance must extend to runtime data access, not just training data.

THEMIS implementation: THEMIS intercepts every data access operation and applies data classification gates. PII is automatically detected and redacted before it enters agent context windows. Mnemosyne (the AIOS memory and vector database module) tracks data lineage—recording what data sources were accessed, when, and how the data was used. All data access events are logged in the Merkle-DAG evidence chain with content hashes, enabling auditors to verify data governance compliance without accessing the underlying sensitive data. THEMIS’s tenant isolation ensures that data from different organizational units or clients is cryptographically separated, preventing cross-contamination that would violate data governance requirements.
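The redaction-plus-lineage idea can be sketched as follows. This is a minimal example under stated assumptions: it detects only email addresses with a simple regular expression, and the record layout (`source`, `content_sha256`, and so on) is hypothetical rather than the Mnemosyne schema. A production gate would need far broader PII detection.

```python
import hashlib
import re
from datetime import datetime, timezone

# Deliberately simple pattern; real PII detection covers many more categories.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact(text: str) -> tuple[str, int]:
    """Replace email addresses and return (redacted_text, number_of_redactions)."""
    return EMAIL.subn("[REDACTED_EMAIL]", text)


def lineage_record(source: str, raw: str) -> dict:
    """Build an audit record for one data access without storing the raw content."""
    redacted, count = redact(raw)
    return {
        "source": source,
        "accessed_at": datetime.now(timezone.utc).isoformat(),
        # The hash lets an auditor confirm which content was accessed
        # if the original is presented later, without the log holding it.
        "content_sha256": hashlib.sha256(raw.encode("utf-8")).hexdigest(),
        "redactions": count,
        "context_text": redacted,  # only this version reaches the agent
    }
```

One design caveat: a plain hash of very short or predictable content can be guessed by brute force, so a keyed hash may be preferable where the raw values are low-entropy.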

Article 11: Technical Documentation

Requirement: Technical documentation must be drawn up before the system is placed on the market and kept up to date. Annex IV specifies required content: a general description of the AI system, detailed description of elements and development process, monitoring and functioning information, description of the risk management system, record of changes, and applicable standards. This documentation must enable authorities to assess the provider’s compliance.

Agentic challenge: Agentic systems are inherently dynamic. Agent behaviors, tool integrations, and prompt configurations change frequently. Traditional static documentation becomes stale within weeks. For organizations practicing agile development, retrospectively creating the comprehensive documentation required by Annex IV is one of the most challenging compliance tasks.

THEMIS implementation: THEMIS auto-generates compliance documentation from its operational data. Policy definitions are version-controlled, with every change recorded in the Merkle-DAG. Architecture descriptions, data flow diagrams, risk assessment records, and test results are maintained as living documents that update as the system evolves. The AIOS platform provides exportable compliance reports—mapped to Annex IV’s specific requirements—that can be generated on demand for regulatory review. Because THEMIS operates as middleware around the agent, it captures system behavior documentation that the agent framework itself does not provide.

Article 12: Record-Keeping

Requirement: High-risk AI systems must technically allow for the automatic recording of events (logs) over the lifetime of the system. Logs must enable identification of situations that may result in risk, support post-market monitoring, and facilitate operational oversight. Article 19 requires logs to be retained for at least six months. The draft standard ISO/IEC DIS 24970 provides implementation guidance, but it will not be finalized until late 2026.

Agentic challenge: Agentic systems generate vastly more events than conventional AI—each tool call, sub-agent delegation, reasoning step, and external API invocation is a recordable event. Logs must also be tamper-resistant, yet no harmonized technical standard specifies how tamper resistance should be implemented. The gap between the Act’s requirements and available implementation standards creates compliance uncertainty.

THEMIS implementation: THEMIS’s Merkle-DAG evidence chain is a purpose-built answer to Article 12. Every agent action generates a tamper-evident evidence node containing content hashes, policy evaluation results, caller identity, tenant ID, timestamp, and digital signature. The append-only structure guarantees completeness: any deleted record changes the root hash, making gaps mathematically detectable. External anchoring to independent witnesses provides third-party verification. Retention is configurable per tenant and per jurisdiction, with a default six-month minimum that satisfies Article 19. Crypto-shredding resolves the GDPR Article 17 tension by destroying encryption keys for personal data while preserving evidence chain integrity.
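The tamper-evidence property can be illustrated with a much simpler structure than a full Merkle-DAG. The sketch below is a linear hash chain, which is an assumption made for brevity: each node commits to the hash of its predecessor, so editing or deleting an interior record breaks verification. Class and field names are hypothetical, and digital signatures are omitted.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first node


def _digest(body: dict) -> str:
    """Hash a node body using a canonical JSON encoding."""
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class EvidenceChain:
    def __init__(self) -> None:
        self.nodes: list[dict] = []

    def append(self, tenant_id: str, caller: str, action: str, timestamp: str) -> str:
        """Append one evidence node and return its hash."""
        body = {
            "prev": self.nodes[-1]["hash"] if self.nodes else GENESIS,
            "tenant_id": tenant_id,
            "caller": caller,
            "action_sha256": hashlib.sha256(action.encode("utf-8")).hexdigest(),
            "timestamp": timestamp,
        }
        node = dict(body, hash=_digest(body))
        self.nodes.append(node)
        return node["hash"]

    def verify(self) -> bool:
        """Recompute every hash and check that each node links to its predecessor."""
        prev = GENESIS
        for node in self.nodes:
            body = {k: v for k, v in node.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != node["hash"]:
                return False
            prev = node["hash"]
        return True
```

Note that a chain like this cannot by itself detect removal of the most recent records; that requires comparing the latest hash against a copy held elsewhere, which is the role external anchoring plays.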

THEMIS’s Merkle-DAG evidence chain satisfies Article 12’s “automatic recording of events” requirement with mathematical tamper evidence—exceeding what the draft ISO/IEC 24970 standard is expected to require.

Article 13: Transparency and Provision of Information to Deployers

Requirement: High-risk AI systems must be designed and developed to ensure their operation is sufficiently transparent to enable deployers to interpret the system’s output and use it appropriately. Providers must supply instructions for use including the system’s intended purpose, level of accuracy and robustness, known limitations, and any circumstances that may lead to risks.

Agentic challenge: Agentic systems are less interpretable than conventional models because their outputs emerge from multi-step reasoning chains. A single response may incorporate tool outputs, sub-agent results, and multiple model calls. Explaining why the agent did what it did requires tracing the entire decision chain.

THEMIS implementation: THEMIS’s explainability hooks attach to each model call, logging the prompt-response pair with automated PII redaction. Thea (the AIOS quality assurance module) provides decision trace visualization—showing deployers the sequence of reasoning steps, tool invocations, and data accesses that led to any specific output. Zero-knowledge proofs enable deployers to verify that specific properties held (authorization, fairness, data classification) without accessing sensitive underlying data. Deployer dashboards provide real-time visibility into system accuracy, latency, policy violation rates, and cost—satisfying the “instructions for use” requirement with live operational data.
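Tracing "the entire decision chain" amounts to walking parent links from an output back to the originating request. The sketch below assumes each logged step records the step that triggered it; the `Step` structure and its field names are hypothetical and are not Thea's data model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    step_id: str
    parent_id: Optional[str]  # None for the step that started the workflow
    kind: str                 # e.g. "model_call", "tool_call", "data_access"
    summary: str


def trace_to(step_id: str, steps: list[Step]) -> list[Step]:
    """Return the chain of steps leading to step_id, oldest first."""
    by_id = {s.step_id: s for s in steps}
    path: list[Step] = []
    seen: set[str] = set()
    current = by_id.get(step_id)
    while current is not None and current.step_id not in seen:
        seen.add(current.step_id)  # guard against malformed, cyclic logs
        path.append(current)
        current = by_id.get(current.parent_id) if current.parent_id else None
    path.reverse()
    return path
```

Steps that ran in the same session but did not contribute to the selected output are left out of the returned path, which keeps the explanation shown to a deployer focused on what actually led to the result.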

Article 14: Human Oversight

Requirement: High-risk AI systems must be designed and developed to allow effective human oversight during use. Human overseers must be able to understand the system’s capabilities and limitations, monitor its operation, detect anomalies, avoid automation bias, and intervene or interrupt the system via stop buttons, override mechanisms, or the ability to prevent outputs from taking effect.

Agentic challenge: Agentic systems operate at machine speed across multi-step workflows. By the time a human reviewer examines an agent’s action, the agent may have already executed dozens of subsequent steps. Human oversight for agentic systems requires architectural support—not just process guidelines.

THEMIS implementation: Hermes (the AIOS orchestration engine) enforces configurable human-in-the-loop checkpoints. For high-risk actions—defined by policy—agent execution pauses and routes to a human approver before proceeding. Kill switches halt all agent operations instantly, with the halt event recorded in the Merkle-DAG evidence chain. Override APIs allow authorized humans to modify agent behavior mid-workflow. Anomaly alerting (via Prometheus/Grafana) notifies human supervisors when agent behavior deviates from expected patterns. THEMIS’s policy enforcement enables “graduated autonomy”—agents can operate independently for low-risk actions while requiring human approval for high-risk decisions, implementing Article 14’s spirit of meaningful oversight without eliminating the efficiency benefits of agentic AI.
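The "graduated autonomy" pattern described above can be sketched as a gate that every action passes through before execution. This is a minimal illustration, not the Hermes API: the class, its method names, and the decision labels are assumptions.

```python
import threading


class OversightGate:
    """Decides whether an action may proceed, must wait for approval, or is halted."""

    def __init__(self, high_risk_tools: set[str]) -> None:
        self._high_risk = set(high_risk_tools)
        self._halted = threading.Event()  # kill switch state, safe across threads
        self.pending: list[dict] = []

    def kill(self) -> None:
        """Halt all further actions until the gate is replaced or reset."""
        self._halted.set()

    def submit(self, agent_id: str, tool: str, args: dict) -> str:
        """Return 'halted', 'pending_approval', or 'allow' for a proposed action."""
        if self._halted.is_set():
            return "halted"
        if tool in self._high_risk:
            self.pending.append({"agent_id": agent_id, "tool": tool, "args": args})
            return "pending_approval"
        return "allow"

    def approve(self, index: int, approver: str) -> dict:
        """Release one pending action, recording who approved it."""
        if self._halted.is_set():
            raise RuntimeError("system is halted; approvals are disabled")
        request = self.pending.pop(index)
        request["approved_by"] = approver
        return request
```

The key design point is that the check happens before the action runs, so a human decision cannot be overtaken by the agent's subsequent steps.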

Article 15: Accuracy, Robustness, and Cybersecurity

Requirement: High-risk AI systems must achieve appropriate levels of accuracy, robustness, and cybersecurity throughout their lifecycle. This includes resilience against errors, faults, unauthorized access, adversarial attacks (data poisoning, model manipulation, prompt injection), and attempts to exploit system vulnerabilities.

Agentic challenge: Agents are uniquely vulnerable to prompt injection attacks—adversarial inputs embedded in tool outputs, documents, or user messages that hijack agent behavior. The OWASP Top 10 for Agentic Applications identifies tool misuse, prompt injection, and memory poisoning as top threats. Robustness for agentic systems means resilience under adversarial conditions, not just normal operation.

THEMIS implementation: Ares (the AIOS security testing module) performs continuous adversarial testing against deployed agents, simulating prompt injection, tool misuse, and data exfiltration attacks. Accuracy SLOs are defined and monitored in real-time—when accuracy drops below thresholds, THEMIS can automatically restrict agent capabilities or route to human oversight. Vault-based key management (HashiCorp Vault, AWS KMS, Azure Key Vault, GCP KMS) protects cryptographic keys with hardware-backed security. mTLS encrypts all agent-to-agent and agent-to-service communication. THEMIS’s policy enforcement provides an additional defense layer: even if an agent is compromised by prompt injection, the governance runtime blocks actions that violate policy—regardless of what the agent “wants” to do.
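An accuracy SLO that restricts capabilities when it is breached can be approximated with a rolling window over graded outcomes. The sketch below is an assumption-laden illustration: the class name, the window size, and the "restricted" and "normal" labels are hypothetical, and it presumes some separate process decides whether each output was correct.

```python
from collections import deque
from typing import Optional


class AccuracySLO:
    """Tracks accuracy over the most recent `window` graded outputs."""

    def __init__(self, target: float, window: int) -> None:
        self.target = target
        self.window = window
        self._results: deque = deque(maxlen=window)  # oldest results drop off

    def record(self, correct: bool) -> None:
        self._results.append(bool(correct))

    def accuracy(self) -> Optional[float]:
        """Rolling accuracy, or None until a full window has been observed."""
        if len(self._results) < self.window:
            return None
        return sum(self._results) / len(self._results)

    def mode(self) -> str:
        """'restricted' once a full window falls below target, else 'normal'."""
        acc = self.accuracy()
        if acc is not None and acc < self.target:
            return "restricted"
        return "normal"
```

A caller would consult `mode()` before each high-impact action and, when it returns "restricted", narrow the agent's tool set or route the action to a human reviewer.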

Operational Obligations: Articles 16–27

Beyond the technical requirements of Articles 9–15, the EU AI Act imposes operational obligations on providers (Articles 16–22) and deployers (Articles 26–27). THEMIS addresses the most critical of these.

Article 16: Provider Obligations

Providers must ensure ongoing compliance, maintain a quality management system (Article 17), keep documentation at the disposal of national competent authorities for ten years (Article 18), retain automatically generated logs (Article 19), take corrective actions when non-compliance is identified (Article 20), and cooperate with competent authorities (Article 21). THEMIS’s continuous monitoring, auto-generated documentation, Merkle-DAG evidence retention, and exportable compliance reports directly support all six of these obligations.

Article 17: Quality Management System

Providers must establish a quality management system including policies for regulatory compliance, techniques for AI system design and development, quality management procedures, and resource management processes. THEMIS serves as the operational backbone of this QMS for agentic systems—enforcing policies at runtime, documenting system behavior automatically, and providing the auditable evidence that the QMS is functioning effectively.

Article 26: Deployer Obligations

Deployers must implement appropriate technical and organizational measures, assign competent human oversight, monitor the system in accordance with instructions for use, and maintain logs under their control. THEMIS’s deployer dashboards, human-in-the-loop enforcement, anomaly alerting, and tenant-specific evidence chains provide deployers with the tools they need to fulfill these obligations without building custom governance infrastructure.

Master Compliance Checklist: THEMIS Feature Mapping

The following table provides a comprehensive, item-by-item checklist mapping EU AI Act high-risk requirements to THEMIS capabilities. Use this as a compliance tracking tool for your organization.

Legend: ✅ = Fully addressed by THEMIS | ⚠️ = Partially addressed (org input required) | ⬜ = Organization’s responsibility

| Article | Requirement | THEMIS / AIOS Capability | Status |
|---|---|---|---|
| Art. 9 | Documented risk management system | Continuous risk scoring, policy violation tracking, Merkle-DAG audit trail of risk decisions | ✅ |
| Art. 9 | Identify and analyze foreseeable risks | Ares adversarial testing; anomaly detection; drift monitoring | ✅ |
| Art. 9 | Adopt risk mitigation measures | Policy enforcement blocks high-risk actions; graduated autonomy; kill switches | ✅ |
| Art. 9 | Testing for consistent performance | Ares automated testing; accuracy SLOs; regression detection | ✅ |
| Art. 9 | Define residual risk acceptance | Org defines risk thresholds; THEMIS enforces and documents them | ⚠️ |
| Art. 10 | Data governance practices | PII gates, data classification, tenant isolation, Mnemosyne lineage tracking | ✅ |
| Art. 10 | Training data relevance and quality | Org curates training data; THEMIS monitors runtime data access quality | ⚠️ |
| Art. 10 | Bias detection and mitigation | zk-SNARK fairness proofs; demographic parity monitoring | ✅ |
| Art. 10 | Document data provenance | Merkle-DAG records all data access with source hashes and timestamps | ✅ |
| Art. 11 | Technical documentation (Annex IV) | Auto-generated compliance reports; versioned policy docs; architecture export | ✅ |
| Art. 11 | System description and intended purpose | Org defines purpose; THEMIS documents runtime behavior against it | ⚠️ |
| Art. 11 | Keep documentation up to date | Living documents update as system evolves; Merkle-DAG version history | ✅ |
| Art. 12 | Automatic recording of events | Merkle-DAG evidence chain: every action, every agent, tamper-evident | ✅ |
| Art. 12 | Enable risk identification from logs | Policy violation alerts, anomaly detection, drift indicators in evidence stream | ✅ |
| Art. 12 | Support post-market monitoring | Continuous monitoring dashboards; exportable compliance data | ✅ |
| Art. 12 | Tamper-resistant log storage | Merkle-DAG + external anchoring; mathematically tamper-evident | ✅ |
| Art. 19 | Retain logs ≥ 6 months | Configurable retention per tenant/jurisdiction; default 6-month minimum | ✅ |
| Art. 19 | GDPR Art. 17 compatibility | Crypto-shredding: destroy PII encryption keys, preserve evidence integrity | ✅ |
| Art. 13 | Transparent operation for deployers | Explainability hooks, decision trace visualization (Thea), deployer dashboards | ✅ |
| Art. 13 | Instructions for use | Auto-generated capability docs, known limitations, accuracy/robustness metrics | ✅ |
| Art. 13 | Disclose accuracy and limitations | Real-time accuracy SLOs, confidence scores, error rate tracking | ✅ |
| Art. 14 | Allow effective human oversight | Configurable human-in-the-loop checkpoints (Hermes); approval workflows | ✅ |
| Art. 14 | Understand capabilities/limitations | Deployer dashboards with real-time performance and limitation visibility | ✅ |
| Art. 14 | Detect and correct anomalies | Prometheus/Grafana anomaly alerting; automated escalation to human oversight | ✅ |
| Art. 14 | Intervene and interrupt (stop button) | Kill switches; override APIs; immediate halt with Merkle-DAG evidence record | ✅ |
| Art. 14 | Avoid automation bias | Confidence calibration; mandatory human review for low-confidence outputs | ⚠️ |
| Art. 15 | Appropriate accuracy levels | Accuracy SLOs; continuous performance monitoring; regression detection | ✅ |
| Art. 15 | Robustness against errors/faults | Circuit breakers; graceful degradation; error compounding detection | ✅ |
| Art. 15 | Cybersecurity resilience | mTLS, vault key management, Ares adversarial testing, prompt injection defense | ✅ |
| Art. 15 | Resist adversarial attacks | Policy enforcement blocks unauthorized actions regardless of agent compromise | ✅ |
| Art. 16 | Ensure ongoing compliance | Continuous control monitoring; automated compliance status reporting | ✅ |
| Art. 17 | Quality management system | THEMIS as QMS operational backbone; policy enforcement + evidence | ✅ |
| Art. 20 | Corrective actions on non-compliance | Automated policy violation response; incident logging; escalation workflows | ✅ |
| Art. 26 | Deployer monitoring obligations | Tenant-specific dashboards, human oversight tools, evidence access | ✅ |

Organizational Readiness: What THEMIS Cannot Do For You

THEMIS addresses the majority of technical compliance requirements for agentic AI systems. However, several obligations require organizational action that no technology platform can fully automate:

AI system inventory and classification. Organizations must inventory every AI system they develop, deploy, or use, and classify each against Annex III categories. An appliedAI study of 106 enterprise AI systems found 18% clearly high-risk and 40% with unclear classification. This assessment requires legal and domain expertise.

Intended purpose definition. Article 6 and Annex III classification depend on the system’s “intended purpose.” Organizations must define and document this purpose formally. THEMIS can enforce policies against the stated purpose, but the purpose itself must be defined by the organization.

Conformity assessment. Prior to placing a high-risk system on the market, providers must complete a conformity assessment, prepare an EU declaration of conformity, and register the system in the EU database. THEMIS provides the evidence required for this assessment but does not perform the assessment itself.

Fundamental rights impact assessment. Article 27 requires deployers to conduct a fundamental rights impact assessment for certain high-risk systems. This is a legal and ethical analysis that requires human judgment, informed by THEMIS’s operational data.

Competent authority engagement. Organizations must identify and connect with relevant national competent authorities and participate in regulatory sandboxes where appropriate. THEMIS’s exportable compliance reports facilitate these interactions.

The Cost of Non-Compliance

The EU AI Act’s penalty structure is designed to be proportionate but severe:

Prohibited practices (Article 5): Up to €35 million or 7% of total worldwide annual turnover, whichever is higher. For context, 7% of global revenue would cost Meta approximately €8.5 billion and Microsoft approximately €16 billion.

High-risk system non-compliance (Articles 9–15): Up to €15 million or 3% of total worldwide annual turnover, whichever is higher. Market surveillance authorities can also suspend or recall non-compliant AI systems from the EU market.

Incorrect or misleading information: Up to €7.5 million or 1% of total worldwide annual turnover, whichever is higher.
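The "fixed amount or percentage of turnover" structure is easy to work through numerically. The sketch below covers only the first two tiers listed above and assumes the "whichever is higher" rule applies to both; the function and dictionary names are illustrative.

```python
# (fixed cap in euros, percent of total worldwide annual turnover)
PENALTY_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_violation": (15_000_000, 3),
}


def max_fine_eur(tier: str, worldwide_turnover_eur: int) -> int:
    """Upper bound of the fine: the larger of the fixed cap and the turnover share."""
    fixed_cap, percent = PENALTY_TIERS[tier]
    # Integer arithmetic avoids floating-point rounding on large euro amounts.
    return max(fixed_cap, worldwide_turnover_eur * percent // 100)
```

For both tiers the crossover sits at €500 million in turnover: below that, the fixed amount is the larger figure; above it, the percentage dominates. A company with €1 billion in turnover therefore faces a ceiling of €70 million for a prohibited practice and €30 million for a high-risk violation.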

Beyond fines, the reputational and operational costs of non-compliance are substantial. Market surveillance authorities can mandate corrective actions including model retraining, prohibit the placing of new systems until compliance is demonstrated, and order withdrawal of existing systems. For organizations whose agentic AI systems are central to their operations, forced withdrawal represents an existential business risk.

The AI governance platform market is projected to reach $492 million in 2026 spending alone. This is not discretionary investment—it is the cost of operating in the EU market with AI systems. THEMIS provides this governance as a runtime service, amortized across every agent action.

Conclusion: Compliance as Architecture, Not Afterthought

The EU AI Act’s August 2, 2026 deadline is five months away. Over half of organizations still lack a basic AI inventory. Only 18% have fully implemented AI governance frameworks despite 88% using AI operationally. The gap between regulatory requirements and organizational readiness is large, and the time left to close it is short.

For organizations deploying agentic AI systems, the compliance challenge is compounded by the unique properties of autonomous agents: cascading actions, sub-agent delegation, dynamic tool integration, temporal complexity, and non-deterministic behavior. These properties make compliance more difficult—but they also make it more important. An ungoverned agent that makes a discriminatory credit decision, exposes patient health data, or executes an unauthorized financial transaction creates regulatory exposure that scales with the agent’s autonomy.

THEMIS provides compliance as architecture. Every agent action passes through four governance layers—identity verification, policy enforcement, evidence recording, and observability—before it executes. The result is an agentic AI system that is governed by design, not by hope. The Merkle-DAG evidence chain proves compliance mathematically. The zero-knowledge proofs verify policy adherence without revealing sensitive data. The human oversight mechanisms ensure that autonomy is bounded and auditable.

The compliance checklist accompanying this paper provides a concrete, actionable tool for tracking readiness. For every requirement where THEMIS provides the technical solution, the checklist shows it. For every requirement where organizational action is needed, the checklist is equally clear. The goal is not to obscure the effort required—it is to reduce it to the minimum necessary and ensure that no requirement is overlooked.

August 2, 2026 is not a deadline to fear. It is a deadline to prepare for. THEMIS provides the technical foundation. This checklist provides the roadmap.


References

European Union (2024). Regulation (EU) 2024/1689 of 13 June 2024 (Artificial Intelligence Act). Official Journal of the European Union, 12 July 2024.

EU AI Act, Article 6: Classification Rules for High-Risk AI Systems.

EU AI Act, Article 9: Risk Management System. Lifecycle risk identification, assessment, and mitigation.

EU AI Act, Article 10: Data and Data Governance. Training, validation, and testing data requirements.

EU AI Act, Article 11 & Annex IV: Technical Documentation. Comprehensive system documentation requirements.

EU AI Act, Article 12: Record-Keeping. Automatic event logging over system lifetime.

EU AI Act, Article 13: Transparency and Provision of Information to Deployers.

EU AI Act, Article 14: Human Oversight. Effective supervision capabilities.

EU AI Act, Article 15: Accuracy, Robustness and Cybersecurity.

EU AI Act, Articles 16–22: Obligations of Providers of High-Risk AI Systems.

EU AI Act, Article 19: Automatically Generated Logs. Minimum six-month retention.

EU AI Act, Articles 26–27: Deployer Obligations and Fundamental Rights Impact Assessment.

EU AI Act, Annex III: High-Risk AI Systems Referred to in Article 6(2). Use case categories.

ISO/IEC DIS 24970 (2025–2026, draft). “Artificial Intelligence — AI System Logging.” CEN-CENELEC JTC 21.

AI2.Work (February 2026). “EU AI Act High-Risk Rules Hit August 2026: Your Compliance Countdown.”

SecurePrivacy (2026). “EU AI Act 2026 Compliance Guide: Key Requirements Explained.”

LegalNodes (February 2026). “EU AI Act 2026 Updates: Compliance Requirements and Business Risks.”

LittleData (February 2026). “EU AI Act Compliance Checklist: 7 Steps to Start Your Journey Today.”

Dataiku (February 2026). “EU AI Act High-Risk Requirements: What Companies Need to Know.”

DPO Consulting (2025). “High-Risk AI Systems Under the EU AI Act: Full Guide.”

appliedAI Institute (2025). Study of 106 enterprise AI systems: 18% clearly high-risk, 40% unclear classification.

VeritasChain Standards Organization (January 2026). “The EU AI Act’s Logging Requirements Are Clear. The Implementation Standards Are Not.”

VDE (2026). “EU AI Act: AI System Logging.” Analysis of Article 12 and ISO/IEC 24970.

Gartner (June 2025). “Predicts Over 40% of Agentic AI Projects Will Be Canceled by End of 2027.”

OWASP (2025). “Top 10 for Agentic Applications.” Agentic AI Security Initiative.

Apotheon.ai (2026). THEMIS: Trusted Hash-Based Evidence Management & Integrity System. Technical Documentation.

Apotheon.ai (2026). AIOS Platform: Hermes, Mnemosyne, Clio, Thea, Ares. Technical Documentation.
