HIPAA AI Agents in Healthcare Workflows

Compliance Architecture for Clinical AI Systems

Heath Emerson, MBA — Founder & CEO

February 2026 | apotheon.ai


Executive Summary

Healthcare organizations are piloting AI agents at unprecedented scale. Nearly 90% of healthcare leaders identify AI as critical for improving patient access and reducing clinician burnout. Yet many initiatives stall at the pilot stage, and the barrier is not technology; it is trust. HIPAA remains the single largest compliance hurdle preventing healthcare organizations from moving AI initiatives into production. A single data breach can trigger federal fines of up to roughly $2.1 million per violation, OCR enforcement actions, and lasting reputational damage.

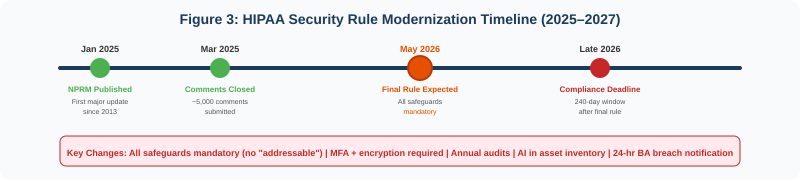

The regulatory landscape is tightening. In January 2025, HHS published the first major proposed update to the HIPAA Security Rule in over a decade. The proposal would eliminate the distinction between “required” and “addressable” safeguards and make all implementation specifications mandatory, with limited exceptions. The final rule is expected in May 2026. Counting 240 days from a May publication date puts the end of the compliance window in late December 2026 at the earliest, or in January 2027 if the rule is published after the first few days of May. The proposed rule explicitly establishes that ePHI used in AI training data, prediction models, and algorithm data is protected by HIPAA, and requires regulated entities to inventory AI software that interacts with ePHI.

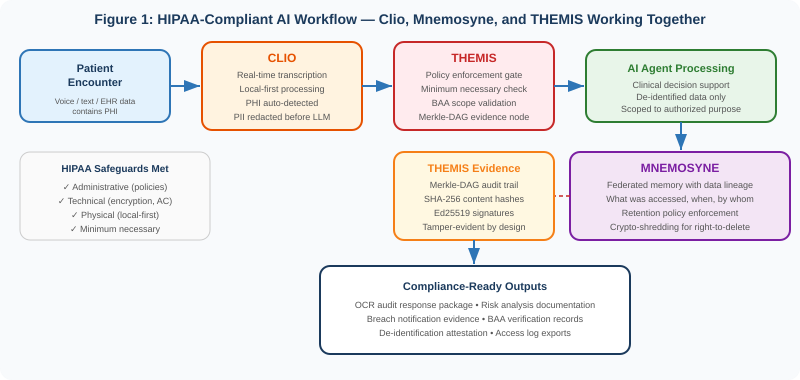

This paper describes how Apotheon.ai’s AIOS platform builds HIPAA-compliant AI workflows by integrating three purpose-built components: Clio for PHI-aware real-time transcription with automatic de-identification, Mnemosyne for federated AI memory with full data lineage and retention policy enforcement, and THEMIS for zero-trust governance with cryptographic audit trails. Together, these components create an architecture where PHI protection is structural—built into the data pipeline from the moment patient information enters the system to the moment it is archived or destroyed—rather than bolted on as an afterthought.

This paper maps the platform’s capabilities to HIPAA’s Privacy Rule, Security Rule, and the proposed 2026 updates. It includes an anonymized case study demonstrating how the workflow operates in a realistic clinical scenario. And it provides a practical readiness assessment for healthcare organizations, covered entities, and their business associates preparing for the new compliance landscape.

The 2026 HIPAA Compliance Landscape for AI

The Security Rule Is Being Rewritten

The HIPAA Security Rule, largely unchanged since 2003 and last meaningfully updated in the 2013 Omnibus Rule, is undergoing its most significant modernization. The Notice of Proposed Rulemaking published January 6, 2025 introduces changes that directly affect how healthcare organizations deploy AI systems.

The single most consequential change is the elimination of the “required” versus “addressable” distinction. Under the current rule, addressable safeguards allow organizations to consider cost and risk in their implementation decisions, with proper documentation. Under the proposed rule, all implementation specifications become mandatory with limited exceptions. For AI systems processing ePHI, this means encryption at rest and in transit, multi-factor authentication, and access controls are no longer optional considerations—they are baseline requirements.

Mandatory encryption: All ePHI must be encrypted at rest and in transit. AI systems that process patient data—whether for clinical decision support, documentation, or analytics—must use FIPS-validated encryption for every data flow.

MFA requirement: Multi-factor authentication is required for all systems accessing ePHI. AI agents that retrieve patient records must authenticate through MFA-protected channels.

AI asset inventory: Regulated entities must maintain a written inventory of technology assets, explicitly including AI software that creates, receives, maintains, transmits, or interacts with ePHI. Shadow AI—staff using unapproved tools like consumer ChatGPT with patient data—becomes a documentable compliance failure.

Annual compliance audits: Formal audits at least once every 12 months replace the more flexible assessment schedule. AI systems must be included in the audit scope.

Business associate verification: BAs must verify compliance with technical safeguards at least once every 12 months through written analysis by a subject matter expert. AI vendors processing PHI face heightened scrutiny.

24-hour notification: Business associates must notify covered entities within 24 hours of activating their contingency plans. That is far faster than the current outer limit of 60 days for breach notification to individuals.

The proposed HIPAA Security Rule explicitly states that ePHI in AI training data, prediction models, and algorithm data maintained by a regulated entity is protected by HIPAA. Every AI system that touches patient data is in scope.

HIPAA and AI: Where the Rules Already Apply

Even before the proposed updates take effect, HIPAA’s existing framework applies to AI systems in healthcare. The Privacy Rule’s minimum necessary standard requires that AI tools access only the PHI strictly necessary for their authorized purpose, even though AI models often seek comprehensive datasets to optimize performance. The Security Rule’s administrative, technical, and physical safeguards apply to any system that creates, receives, maintains, or transmits ePHI—including AI inference engines, training pipelines, and agent orchestration layers.

AI vendors that process PHI on behalf of covered entities are business associates under HIPAA and must execute Business Associate Agreements. The BAA must specify permissible uses of PHI, prohibit secondary use for AI model training unless explicitly authorized, mandate security protocols including encryption and access controls, require subcontractor compliance if the AI vendor uses third-party services, and include transparency clauses for AI decision-making in clinical contexts.

The penalties for non-compliance are substantial. Civil fines range from $141 to $2,134,831 per violation, with an annual cap of roughly $2.1 million per violation category. Criminal penalties for knowing violations are tiered by intent: up to one, five, or ten years of imprisonment, with fines of up to $50,000, $100,000, or $250,000 respectively. In 2025, OCR levied more than $6.6 million in HIPAA fines, with the highest penalty of $3 million resulting from a phishing attack on a business associate. OCR’s third phase of compliance audits, announced in March 2025, is now underway and initially targets 50 covered entities and business associates.

The AI-Specific Risk Landscape

AI systems introduce compliance risks that traditional health IT does not. Generative AI tools may collect PHI in ways that raise unauthorized disclosure concerns, particularly when the tools were not designed with HIPAA safeguards. Black-box models complicate audits and make it difficult for privacy officers to validate how PHI is used. Consumer-grade AI tools such as the free versions of ChatGPT, Claude, or Gemini may retain user inputs and use them for model training, depending on the product and its settings. If a clinician pastes a patient note into such a tool, that data may be incorporated into training data and could, in principle, be surfaced to other users. Such a disclosure can constitute an impermissible disclosure of PHI, with penalties reaching $50,000 per violation.

Agentic AI systems—where autonomous agents make decisions, invoke tools, and chain actions across multiple data sources—multiply these risks. A single clinical workflow might involve an agent accessing an EHR, retrieving lab results, consulting a drug interaction database, generating a clinical summary, and routing it to the appropriate provider. Each step in that chain is a potential PHI exposure point. Without governance architecture that enforces compliance at every step, the risk compounds with every action the agent takes.

Three Components, One Compliant Workflow

Apotheon’s approach to HIPAA-compliant AI workflows is to build compliance into the architecture itself, not into a compliance checklist reviewed after the system is built. Three platform components—Clio, Mnemosyne, and THEMIS—each address a distinct layer of the compliance challenge, and together they create an end-to-end pipeline where PHI is protected at every stage.

Clio: PHI-Aware Real-Time Transcription

Clio is Apotheon’s real-time speech transcription engine, designed with a local-first architecture that processes audio on-premises before any data leaves the organization’s boundary. In healthcare workflows, Clio serves as the entry point where unstructured clinical data—patient conversations, dictated notes, telehealth sessions—is converted to structured text. This is the point of highest PHI risk, because raw clinical speech contains every category of protected information: patient names, dates of birth, medical record numbers, diagnoses, treatment plans, and provider identities.

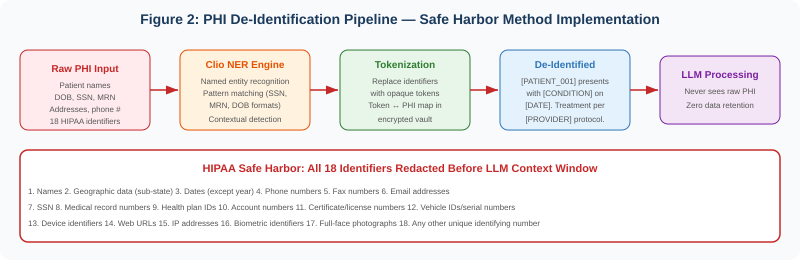

Clio addresses this risk through an integrated de-identification pipeline that operates before any text reaches an LLM context window. The pipeline implements HIPAA’s Safe Harbor method, which requires removal of 18 specific categories of identifiers. Clio’s Named Entity Recognition engine detects these identifiers through both pattern matching (for structured formats like SSNs, MRNs, and dates of birth) and contextual detection (for names, locations, and other identifiers that require natural language understanding). Detected identifiers are replaced with opaque tokens—[PATIENT_001], [DATE_001], [PROVIDER_001]—before the text enters any AI processing pipeline.

The token-to-PHI mapping is stored in an encrypted vault managed by THEMIS, accessible only to authorized re-identification processes. This architecture means the LLM never processes raw PHI. If the AI model’s outputs are intercepted, leaked, or retained, they contain no patient-identifying information. The de-identification happens at the edge, before data enters the broader system, and the re-identification capability is controlled by policy and audited by the governance layer.

Local-first processing: Audio transcription occurs on-premises. No raw audio is transmitted to cloud services. This eliminates an entire category of PHI exposure—the audio itself never leaves the organization’s physical boundary.

Sub-2-second latency: Real-time transcription with de-identification operates with less than two seconds of latency, enabling use in live clinical workflows including telehealth sessions and bedside documentation.

Safe Harbor compliance: All 18 HIPAA-defined identifiers are detected and tokenized. The system maintains a configurable sensitivity threshold—organizations can choose to err on the side of over-redaction in high-sensitivity contexts.
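The pattern-matching half of this pipeline can be sketched in a few lines. The following is a minimal illustration, not Clio's implementation: the regular expressions, the `tokenize_identifiers` function, and the in-memory token map are all assumptions made for the example, and the contextual (NER) half is omitted entirely.

```python
import re
from collections import defaultdict

# Hypothetical patterns for three structured identifier types. Real formats
# (especially MRNs) vary by organization; these are assumptions for illustration.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}-\d{3}-\d{4}\b"),
    "MRN": re.compile(r"\bMRN[:\s]*\d{6,10}\b"),
}


def tokenize_identifiers(text):
    """Replace matched identifiers with opaque tokens.

    Returns the redacted text and a token-to-original map. In the architecture
    described above, that map would go to an encrypted vault, not stay in memory.
    """
    token_map = {}
    seen = {}                    # original value -> token, so repeats share a token
    counters = defaultdict(int)  # per-category running number

    for label, pattern in PATTERNS.items():
        def substitute(match, label=label):
            value = match.group(0)
            if value not in seen:
                counters[label] += 1
                token = f"[{label}_{counters[label]:03d}]"
                seen[value] = token
                token_map[token] = value
            return seen[value]

        text = pattern.sub(substitute, text)
    return text, token_map
```

Calling `tokenize_identifiers("SSN 123-45-6789")` returns the text with `[SSN_001]` in place of the number, plus a one-entry map for later re-identification. Repeated values reuse the same token, which keeps the redacted text internally consistent for downstream summarization.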

Mnemosyne: Federated Memory with Data Lineage

In healthcare AI workflows, the question is not just whether PHI is protected in the moment of processing—it is whether the organization can demonstrate, retrospectively, exactly what data was accessed, when, by whom, and for what purpose. This is the domain of Mnemosyne, Apotheon’s federated AI memory service.

Mnemosyne provides tiered storage with full data lineage tracking. Every piece of data that enters the memory system—whether from Clio’s transcription pipeline, an EHR integration, or an agent’s intermediate reasoning—is tagged with provenance metadata: the source system, the time of access, the requesting agent’s identity, the authorization policy that permitted access, and the purpose for which the data was accessed. This lineage record persists for the full retention period and is itself stored as a Merkle-DAG node in THEMIS’s evidence chain.
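As a rough sketch of what such a provenance record might look like, the example below captures the five metadata fields listed above and derives a stable digest that could be referenced from an evidence chain. The `LineageRecord` class, its field names, and `record_access` are hypothetical and are not taken from Mnemosyne's documentation.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class LineageRecord:
    """Provenance metadata for one data access (field names are illustrative)."""
    source_system: str
    accessed_at: str   # ISO 8601 timestamp, UTC
    agent_id: str
    policy_id: str
    purpose: str

    def digest(self):
        """Stable SHA-256 digest of the record, usable as an evidence reference."""
        canonical = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def record_access(source_system, agent_id, policy_id, purpose, now=None):
    """Build a lineage record; `now` is injectable so tests are deterministic."""
    now = now or datetime.now(timezone.utc)
    return LineageRecord(source_system, now.isoformat(), agent_id, policy_id, purpose)
```

Serializing with sorted keys before hashing means two records with identical content always produce the same digest, regardless of how the fields were supplied.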

Minimum necessary enforcement: Mnemosyne enforces HIPAA’s minimum necessary standard at the memory layer. Agents can only access the specific data elements their authorized purpose requires. A clinical summarization agent, for example, can access progress notes and lab results but not billing records or insurance information—even if those records exist in the same patient context.

Retention policy enforcement: HIPAA requires covered entities to retain documentation for six years from the date of creation or the date when it was last in effect, whichever is later. Many states impose longer retention periods. Mnemosyne enforces retention policies automatically, with configurable rules per data type and jurisdiction. When retention periods expire, data is either archived or destroyed per policy—with the destruction event itself recorded in the evidence chain.

Crypto-shredding: When data must be destroyed—whether due to retention policy expiration or a patient’s request—Mnemosyne uses crypto-shredding. Rather than attempting to locate and delete every copy of a data element across distributed storage, the system destroys the encryption keys that protect the data. The encrypted data remains in storage but is mathematically irrecoverable without the keys. The Merkle-DAG evidence chain records that the keys were destroyed, when, and under what authorization—preserving the audit trail while making the underlying data permanently inaccessible.

Federated architecture: In multi-site healthcare deployments—hospital systems, research networks, accountable care organizations—Mnemosyne’s federated architecture keeps data at its source while enabling authorized cross-site AI workflows. PHI does not need to be centralized for AI agents to operate across organizational boundaries. Each site maintains sovereignty over its data while participating in governed federated workflows.
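The crypto-shredding idea described above can be shown with a small sketch: keys and ciphertext live in separate stores, and destruction removes only the key. Everything here is an assumption for illustration, including the `ShreddableStore` class. In particular, the hash-based keystream is a toy stand-in so the example runs on the standard library alone; a real deployment would use an authenticated cipher from a vetted library, with keys held in a KMS or HSM.

```python
import hashlib
import secrets


def _keystream(key, nonce, length):
    """Derive a keystream by hashing key + nonce + counter (toy construction)."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(out[:length])


class ShreddableStore:
    """Keeps ciphertext and keys apart so that deleting a key shreds the data."""

    def __init__(self):
        self._keys = {}         # record_id -> key (a KMS or HSM in practice)
        self._ciphertexts = {}  # record_id -> (nonce, ciphertext)

    def put(self, record_id, plaintext):
        key = secrets.token_bytes(32)
        nonce = secrets.token_bytes(16)
        stream = _keystream(key, nonce, len(plaintext))
        self._keys[record_id] = key
        self._ciphertexts[record_id] = (nonce, bytes(a ^ b for a, b in zip(plaintext, stream)))

    def get(self, record_id):
        if record_id not in self._keys:
            raise KeyError(f"key for {record_id} was destroyed; data is unrecoverable")
        nonce, ciphertext = self._ciphertexts[record_id]
        stream = _keystream(self._keys[record_id], nonce, len(ciphertext))
        return bytes(a ^ b for a, b in zip(ciphertext, stream))

    def shred(self, record_id):
        """Destroy only the key; the ciphertext stays in storage."""
        del self._keys[record_id]

    def has_ciphertext(self, record_id):
        return record_id in self._ciphertexts
```

After `shred("note-1")`, `has_ciphertext("note-1")` is still true but `get("note-1")` raises, which mirrors the property the text relies on: the bytes remain, the ability to read them does not. Recording the shred event in an audit log is left out of this sketch.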

THEMIS: Zero-Trust Governance Runtime

THEMIS is the governance layer that ties everything together. Every action in the workflow—Clio’s transcription, Mnemosyne’s data access, the AI agent’s processing—passes through THEMIS’s policy enforcement gate. THEMIS verifies the identity of the requesting agent, evaluates the action against the applicable policies (HIPAA minimum necessary, BAA scope, organizational access controls), and generates a tamper-evident evidence node in the Merkle-DAG before permitting the action to proceed.
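The ordering described here (evaluate, record evidence, then execute) is the important part, and a short sketch makes it concrete. The `PolicyGate` class and its purpose-based policy table below are hypothetical simplifications; identity verification and cryptographic evidence nodes are reduced to a plain in-memory list.

```python
from dataclasses import dataclass, field


@dataclass
class PolicyGate:
    """Default-deny gate: evaluate, record evidence, and only then execute."""
    # agent_id -> set of purposes permitted by the applicable policy or BAA
    # (hypothetical data model for this sketch)
    permitted_purposes: dict
    evidence: list = field(default_factory=list)

    def execute(self, agent_id, purpose, action):
        allowed = purpose in self.permitted_purposes.get(agent_id, set())
        decision = "PERMIT" if allowed else "DENY"
        # Evidence is written before the action runs, for permits and denials alike.
        self.evidence.append({"agent": agent_id, "purpose": purpose, "decision": decision})
        if not allowed:
            raise PermissionError(f"{agent_id} is not authorized for purpose '{purpose}'")
        return action()
```

Because unknown agents and unknown purposes both fall through to `DENY`, nothing runs unless a policy entry explicitly allows it, and a denied call never reaches `action`.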

Policy enforcement per action: Every agent action is evaluated against policy before execution. There is no “trusted” zone where actions bypass governance. This applies the zero-trust principle to agent workflows and aligns with the access control and audit requirements of both the current and proposed HIPAA Security Rule.

Merkle-DAG audit trail: Every governance decision generates a cryptographically signed evidence node. The Merkle-DAG structure means that altering any node changes the root hash—making tampering mathematically detectable. This directly supports HIPAA’s audit and accountability requirements and provides the evidence base for OCR investigations.

BAA scope enforcement: THEMIS enforces the boundaries defined in Business Associate Agreements at the technical layer. If a BAA permits a vendor to process PHI for clinical decision support but not for research analytics, THEMIS blocks any agent action that falls outside the permitted scope—regardless of what the agent’s instructions request.

Breach detection and response: THEMIS’s continuous monitoring detects anomalous access patterns—unusual volume of PHI access, access outside authorized hours, access to records outside the user’s care team—and triggers automated alerts. Under the proposed 24-hour notification requirement, the speed of detection directly affects compliance.
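The tamper-evidence property of a hash-linked evidence structure can be demonstrated with a minimal sketch. The `EvidenceDag` class below is an assumption for illustration, not THEMIS's data model: each node's identifier is the SHA-256 of its payload plus its parents' hashes, so editing any earlier node breaks verification from every descendant. Digital signatures, which the text also mentions, are omitted.

```python
import hashlib
import json


def node_hash(payload, parent_hashes):
    """Hash a node's payload together with the hashes of its parents."""
    body = json.dumps({"payload": payload, "parents": sorted(parent_hashes)},
                      sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


class EvidenceDag:
    """Append-only DAG in which each node commits to its parents by hash."""

    def __init__(self):
        self.nodes = {}  # hash -> {"payload": ..., "parents": [...]}

    def append(self, payload, parents=()):
        for parent in parents:
            if parent not in self.nodes:
                raise ValueError(f"unknown parent {parent}")
        digest = node_hash(payload, list(parents))
        self.nodes[digest] = {"payload": payload, "parents": list(parents)}
        return digest

    def verify(self, digest):
        """Recompute hashes from `digest` back to the roots; False on any mismatch."""
        node = self.nodes.get(digest)
        if node is None or node_hash(node["payload"], node["parents"]) != digest:
            return False
        return all(self.verify(parent) for parent in node["parents"])
```

An auditor who holds only the latest hash can call `verify` on it and detect a change to any ancestor, because the altered node no longer hashes to the identifier its children committed to.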

HIPAA Safeguard Mapping

The following table maps Apotheon’s platform capabilities to the specific HIPAA safeguards most relevant to AI deployments, covering both current requirements and proposed 2026 updates.

| HIPAA Safeguard | Requirement | Platform Component | Capability |
|---|---|---|---|
| §164.312(a) Access Control | Unique user ID, emergency access, auto logoff, encryption/decryption | THEMIS | RBAC/ABAC per agent action, mTLS identity, session-scoped tokens |
| §164.312(b) Audit Controls | Record and examine activity in systems containing ePHI | THEMIS + Mnemosyne | Merkle-DAG evidence chain, data lineage tracking, tamper-evident logs |
| §164.312(c) Integrity Controls | Protect ePHI from improper alteration or destruction | THEMIS | SHA-256 content hashing, append-only evidence store, crypto-shredding |
| §164.312(d) Authentication | Verify identity of person/entity seeking access | THEMIS | X.509 certificates, HSM-backed identity, MFA enforcement |
| §164.312(e) Transmission Security | Guard against unauthorized access during transmission | THEMIS + Clio | mTLS encryption, FIPS 140-2/3 crypto, local-first processing |
| §164.308(a)(1) Risk Analysis | Accurate and thorough assessment of risks to ePHI | THEMIS (Ares) | Continuous risk scoring, adversarial testing, drift detection |
| §164.308(a)(4) Information Access | Policies for authorizing access to ePHI | THEMIS + Mnemosyne | Minimum necessary enforcement, purpose-scoped access policies |
| §164.308(a)(5) Security Awareness | Security reminders, login monitoring, password management | THEMIS | Automated anomaly alerts, failed auth tracking, policy violation dashboards |
| §164.502(b) Minimum Necessary | Limit PHI use/disclosure to minimum necessary for purpose | Clio + Mnemosyne | Pre-LLM de-identification, field-level access controls, purpose-scoped queries |
| §164.514 De-Identification | Safe Harbor (18 identifiers) or Expert Determination | Clio | NER engine, Safe Harbor pipeline, configurable sensitivity thresholds |
| Proposed: AI Asset Inventory | Written inventory of AI software interacting with ePHI | THEMIS | Automated agent registry, capability mapping, version tracking |
| Proposed: Annual Audit | Formal compliance audit every 12 months | THEMIS | Auto-generated compliance reports, exportable evidence bundles |
| Proposed: BA Verification | Annual written analysis of BA technical safeguards | THEMIS | Continuous control monitoring, BA scope enforcement, signed attestations |

Case Study: AI-Assisted Clinical Documentation (Anonymized)

The following scenario illustrates how Clio, Mnemosyne, and THEMIS work together in a realistic clinical workflow. All patient information is fictitious. The scenario is constructed to demonstrate the compliance controls that activate at each stage of the workflow.

Scenario: Post-Operative Follow-Up Visit

A patient arrives for a post-operative follow-up at a multi-site hospital system. The attending physician uses an AI-powered clinical documentation assistant to streamline the encounter. The system must generate a clinical summary, update the patient’s care plan, and flag any potential complications—all while maintaining HIPAA compliance at every step.

THEMIS Evidence Log — Session TS-2026-0219-4837

TIMESTAMP: 2026-02-19T09:14:22Z

EVENT: session.start | AGENT: clinical-doc-assistant-v3.2

PROVIDER: [PROVIDER_0041] | FACILITY: [FACILITY_012] | DEPT: Orthopedics

POLICY: hipaa-minimum-necessary-v2 | BAA: ba-2025-0847-ehr-vendor

09:14:22 - Clio begins transcription of patient encounter. Audio processed locally. Raw transcript contains PHI across 7 identifier categories.

[09:14:22] CLIO DE-IDENTIFICATION REPORT:

Identifiers detected: 14

─ Patient name: 2 instances → [PATIENT_0293]

─ Date of birth: 1 instance → [DOB_0293]

─ Medical record number: 1 instance → [MRN_0293]

─ Dates (procedure/visit): 4 instances → [DATE_001–004]

─ Provider names: 3 instances → [PROVIDER_0041], [PROVIDER_0089], [PROVIDER_0112]

─ Facility name: 2 instances → [FACILITY_012]

─ Phone number: 1 instance → [PHONE_0293]

Safe Harbor compliance: PASS (18/18 identifier categories scanned)

Token map encrypted and stored in vault (key: km-2026-02-0293)

09:14:23 - De-identified transcript sent to clinical documentation agent. THEMIS evaluates access request against minimum necessary policy.

[09:14:23] THEMIS POLICY EVALUATION:

Agent: clinical-doc-assistant-v3.2

Requested data: progress notes, surgical report, lab results, vitals

Policy: hipaa-minimum-necessary-v2

Evaluation: PERMIT (all requested data within authorized scope)

Denied fields: billing records, insurance info, SSN (outside scope)

Evidence node: dag-node-9f3a7b2e (SHA-256: 7c4d...a91f)

[09:14:23] MNEMOSYNE DATA ACCESS:

Source: EHR integration (hl7-fhir-r4)

Records accessed: 4 (progress note, op report, CBC, vitals)

Lineage ID: ln-2026-02-0293-004

Retention policy: 10 years (state requirement, orthopedic surgery)

Access logged: Mnemosyne lineage record → THEMIS evidence chain

09:14:25 - Agent generates clinical summary from de-identified data. Summary contains no raw PHI. Clinical content preserved.

[09:14:25] AGENT OUTPUT (de-identified excerpt):

"[PATIENT_0293] presents for 6-week post-operative follow-up following right total knee arthroplasty performed on [DATE_001] by [PROVIDER_0089]. Patient reports improved range of motion (0–115 degrees, up from 0–90 at 2-week visit). Wound healing well, no signs of infection. CBC from [DATE_003] within normal limits. Recommend continued physical therapy 3x/week, follow-up in 6 weeks with [PROVIDER_0041]. No complications flagged."

09:14:26 - Re-identification for EHR integration. THEMIS verifies authorization. Token map decrypted. PHI restored for clinical record.

[09:14:26] RE-IDENTIFICATION AUTHORIZATION:

Requestor: ehr-integration-service

Purpose: clinical documentation (write-back to patient chart)

THEMIS evaluation: PERMIT (authorized purpose, authenticated service)

Vault key decrypted: km-2026-02-0293

Re-identified summary written to EHR via FHIR DocumentReference

Evidence node: dag-node-b2c8e4f1 (SHA-256: 3e8f...d72c)

[09:14:27] SESSION SUMMARY:

Duration: 5.1 seconds (transcription through EHR write-back)

PHI exposure to LLM: ZERO (all processing on de-identified data)

Policy evaluations: 4 (all PERMIT within authorized scope)

Denied requests: 1 (billing records outside minimum necessary)

Evidence nodes generated: 6 (full chain from intake to write-back)

Merkle-DAG root updated: root-2026-02-19-09h (verified)

This evidence log demonstrates several critical compliance properties. First, the LLM never processes raw PHI—the de-identification pipeline strips all 18 HIPAA identifier categories before any data enters the AI context window. Second, the minimum necessary standard is enforced technically, not just procedurally: the agent’s request for billing records was denied because it fell outside the authorized scope, even though the patient’s billing data exists in the EHR. Third, every access, decision, and output is recorded in the Merkle-DAG evidence chain with cryptographic signatures—creating a tamper-evident audit trail that an OCR investigator can verify independently. Fourth, the entire workflow completes in five seconds, demonstrating that compliance does not require sacrificing clinical efficiency.

In this workflow, the AI agent generated a clinically useful summary without ever seeing a patient’s name, date of birth, or medical record number. The clinical content—the diagnosis, the measurements, the treatment plan—flowed through the system intact. Only the identifying information was protected. Compliance and utility are not in tension.

Business Associate Agreements for AI Vendors

Any AI vendor that processes PHI on behalf of a covered entity is a business associate under HIPAA and must execute a BAA. With the proposed Security Rule updates, BAAs for AI vendors require enhanced provisions that traditional BAAs do not address. Apotheon’s platform architecture supports these provisions at the technical layer.

Prohibition on model training: The BAA must explicitly prohibit the use of PHI for AI model training unless separately authorized. THEMIS enforces this by blocking any agent action that attempts to write PHI to a training pipeline—the policy evaluation occurs before the action executes, not after.

Zero data retention: Consumer AI tools that retain user inputs for model improvement are incompatible with HIPAA. Apotheon’s architecture processes de-identified data ephemerally—once the agent returns its output, the de-identified context is not retained unless the organization’s retention policy explicitly requires it.

Subcontractor compliance: If Apotheon’s platform integrates with third-party LLM providers, those providers are subcontractors of the business associate. THEMIS’s gateway architecture ensures that no PHI reaches subcontractor services—only de-identified, tokenized data crosses the boundary. The subcontractor never becomes a business associate with respect to PHI because it never processes PHI.

Breach notification: Under the proposed 24-hour notification requirement, THEMIS’s automated breach detection and alerting ensures that anomalous access patterns trigger immediate notification workflows. The evidence chain provides the forensic detail needed for breach investigation and OCR reporting.

Implementation Guidance: Building Your First Compliant Workflow

Step 1: Inventory and Classify

Before deploying AI agents, inventory every system that currently processes ePHI. Under the proposed rule, this inventory must include AI software. Map data flows: where does PHI enter, how does it move through systems, where is it stored, and when is it destroyed? This inventory becomes the foundation for configuring THEMIS policies and Mnemosyne retention rules.
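One way to make this inventory actionable is to keep it as structured data and query it for gaps. The sketch below is illustrative only: the `Asset` fields and the `inventory_findings` helper are assumptions, and the proposed rule does not prescribe any particular schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    """One row of a technology asset inventory (illustrative fields)."""
    name: str
    is_ai: bool
    touches_ephi: bool
    approved: bool
    baa_in_place: bool


def inventory_findings(assets):
    """Return (in-scope AI assets, the subset that needs remediation)."""
    in_scope = [a for a in assets if a.is_ai and a.touches_ephi]
    gaps = [a for a in in_scope if not (a.approved and a.baa_in_place)]
    return in_scope, gaps
```

An unapproved consumer chatbot that staff use with patient data would appear in both lists, which is how shadow AI surfaces as a documented finding instead of an unknown.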

Step 2: Establish the De-Identification Boundary

Deploy Clio at every point where unstructured PHI enters the AI workflow—transcription of clinical encounters, ingestion of clinical documents, processing of patient communications. Configure the Safe Harbor pipeline sensitivity thresholds. Validate that all 18 identifier categories are being detected and tokenized before any data reaches AI processing.

Step 3: Configure Minimum Necessary Policies

For each AI agent in the workflow, define the specific data elements it is authorized to access. Configure these as THEMIS policies. A clinical summarization agent needs progress notes and lab results; it does not need billing records. A scheduling agent needs appointment data and provider availability; it does not need clinical notes. The minimum necessary standard is enforced per agent, per action, per data element.
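A per-agent allowlist of this kind could be expressed as simply as the following sketch. The agent names, field names, and `filter_request` function are hypothetical and do not reflect THEMIS's actual policy syntax; the point is that anything not explicitly listed is denied.

```python
# Hypothetical per-agent allowlists; the categories follow the examples in the text.
AGENT_SCOPES = {
    "clinical-summarizer": {"progress_notes", "lab_results"},
    "scheduler": {"appointments", "provider_availability"},
}


def filter_request(agent_id, requested_fields):
    """Return (permitted, denied) field lists; unknown agents are permitted nothing."""
    scope = AGENT_SCOPES.get(agent_id, set())
    permitted = sorted(f for f in requested_fields if f in scope)
    denied = sorted(f for f in requested_fields if f not in scope)
    return permitted, denied
```

Returning the denied fields alongside the permitted ones gives the audit trail something to record, so a reviewer can see what an agent asked for as well as what it received.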

Step 4: Establish Retention and Destruction Policies

Configure Mnemosyne retention policies per data type and jurisdiction. Federal HIPAA requires six-year retention for documentation; many states require longer. Configure crypto-shredding procedures for end-of-retention destruction. Test the destruction process and verify that the evidence chain properly records the event.
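The retention arithmetic is simple enough to sketch directly. In the example below, the six-year federal period and the "whichever is later" start date come from the text above; the `STATE_OVERRIDES` table, its `"XX"` placeholder jurisdiction, and the function names are assumptions, and actual state periods would need to come from legal review.

```python
from datetime import date

FEDERAL_DOCUMENTATION_YEARS = 6  # federal documentation retention period cited above

# Hypothetical jurisdiction overrides keyed by (state, data type).
STATE_OVERRIDES = {("XX", "surgical_record"): 10}


def add_years(d, years):
    """Add calendar years, mapping Feb 29 to Feb 28 when the target year is not a leap year."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)


def retention_deadline(created, last_effective, state=None, data_type=None):
    """Earliest date on which destruction is allowed under the configured rules."""
    years = max(FEDERAL_DOCUMENTATION_YEARS, STATE_OVERRIDES.get((state, data_type), 0))
    start = max(created, last_effective)  # "whichever is later"
    return add_years(start, years)
```

Taking the maximum of the federal and state periods means a longer state rule always wins, while a missing or shorter override falls back to the federal floor.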

Step 5: Activate Continuous Monitoring

Enable THEMIS’s continuous monitoring: anomalous access detection, policy violation alerting, and compliance dashboard reporting. Configure alert thresholds for the proposed 24-hour notification timeline. Run a tabletop exercise to validate that the notification workflow activates correctly when a simulated breach is detected.
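For a tabletop exercise, it can help to start from explicit, reviewable rules before tuning anything more sophisticated. The sketch below encodes the three access patterns named earlier in this paper (unusual volume, off-hours access, access outside the care team) plus the 24-hour deadline calculation. The thresholds, event fields, and function names are placeholders chosen for illustration, not recommended values or THEMIS settings.

```python
from datetime import datetime, timedelta

NOTIFICATION_WINDOW = timedelta(hours=24)  # proposed rule, as described in this paper


def access_anomalies(event, care_team, max_records=50, work_hours=range(7, 19)):
    """Return the reasons a single access event looks anomalous (possibly empty).

    `max_records` and `work_hours` are placeholder thresholds, not recommendations.
    """
    reasons = []
    if event["record_count"] > max_records:
        reasons.append("unusual volume")
    if event["timestamp"].hour not in work_hours:
        reasons.append("outside authorized hours")
    if event["patient_id"] not in care_team.get(event["user_id"], set()):
        reasons.append("outside care team")
    return reasons


def notification_deadline(activated_at):
    """Latest time to notify the covered entity after contingency-plan activation."""
    return activated_at + NOTIFICATION_WINDOW
```

Returning a list of reasons, instead of a single boolean, lets the alert carry enough context for the responder to triage it quickly, which matters when the clock is measured in hours.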

Step 6: Generate Audit Artifacts

Before the first patient encounter, generate a compliance report from THEMIS to verify that all controls are active and properly configured. This report—combined with the organization’s policies and procedures documentation—forms the basis for the annual compliance audit required under the proposed rule.

Conclusion: Compliance as Architecture

HIPAA compliance for AI in healthcare is not a checkbox exercise. The proposed 2026 Security Rule updates make this explicit: all safeguards are mandatory, AI assets must be inventoried, and the distinction between “good enough” and “required” is eliminated. For healthcare organizations deploying AI agents, this means that compliance must be built into the architecture of the AI system itself—not layered on afterward through policy documents and training programs.

Apotheon’s three-component approach—Clio for PHI-aware transcription with automatic de-identification, Mnemosyne for federated memory with data lineage and retention enforcement, and THEMIS for zero-trust governance with cryptographic audit trails—creates a workflow where HIPAA compliance is structural. The LLM never sees raw PHI. The minimum necessary standard is enforced technically, not just procedurally. Every access, decision, and output is recorded in a tamper-evident evidence chain. And when OCR comes knocking—or when the annual compliance audit arrives—the evidence is already there, cryptographically signed and ready for review.

The healthcare organizations that will succeed with AI in 2026 are not the ones that deploy the most powerful models. They are the ones that deploy AI with governance architectures that make compliance inevitable rather than aspirational. That is what Clio, Mnemosyne, and THEMIS deliver together: compliant healthcare AI workflows where patient data protection is not a constraint on innovation but a built-in property of the system itself.

Learn more at apotheon.ai | Request a healthcare deployment assessment | Contact: info@apotheon.ai

